While we wait for the official documentation, I wanted to briefly add some considerations of my own.

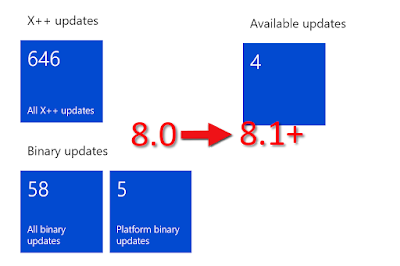

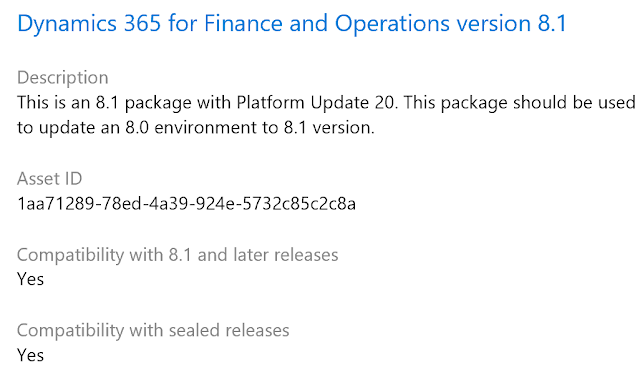

This new feature is quick and easy to set up, and is something everyone should adopt sooner rather than later. It saves the time otherwise spent downloading the complete build artifact somewhere and then uploading it to the Dynamics Lifecycle Services Project Asset Library. After a successful build of the full application, the package is automatically "released" and uploaded to the asset library.

We expect more "tasks" to be added, allowing us to set up a release pipeline that also lets us automatically install a successful build on a designated target environment. Getting this set up now, with the connection working, will set the stage for adding the next features as they are announced.

Here are some of the requirements and considerations while you set this up:

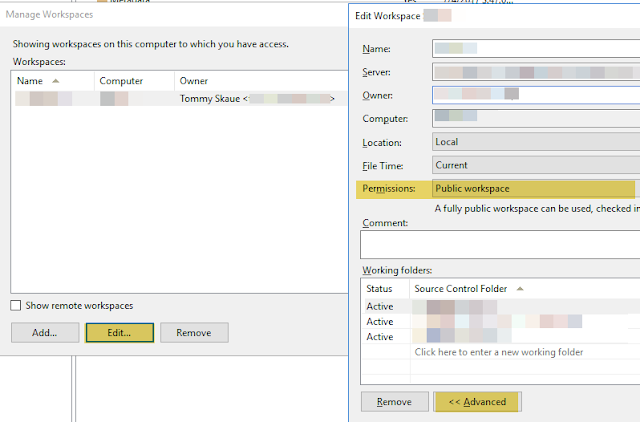

- You need to register an Azure Application of type Native (public, multi-tenant). While it is said you can use the preview experience to register the app in the Azure Portal, I had to use the "non-preview" experience to make sure I got a correct Native Azure app registration, and not a Web app. Adding the necessary permission (user_impersonation) is not enough on its own; the admin consent must also be run for the permission to take effect. If you are setting this up and you are not a Global Admin or Application Admin, you will need to get someone with the necessary permissions to run the admin consent part.

- The connection also requires user credentials as part of the setup. This should not be just any user. You don't want the connection to break because the password was changed or the user was disabled. Multi-factor (or two-factor) authentication will not work here either. So you might want to create a dedicated user for this connection. The user does not need any licenses; a normal cloud user that you have set up and logged on with at least once is enough. The user also needs to be added to the Lifecycle Services project with at least Team member permissions (access to upload to the Asset Library). Log on to LCS with the user once and verify access.

- When you create the release pipeline for the first time, you will need to install the Azure DevOps task extension. Search for "Dynamics" and you should find the "Dynamics 365 Unified Operations Tools". If you are doing the setup with a user that has access to many different Azure DevOps organizations (i.e. you're the partner developer doing this across multiple customers), make sure you install it on the correct Azure DevOps organization. Once it is installed, you will have to refresh the task selection to see the new available task(s), including the new task named "Dynamics Lifecycle Services (LCS) Asset Upload".

- When configuring the task, you will want to use variables to replace parts of the strings, like the name of the asset, the description, and so on. When you run a release, one of the steps actually lists all the available variables, though with a slightly different syntax. Have a look at the list of available variables in this article, plus the tip on how to see the values they are populated with during a run.
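
To illustrate the "slightly different syntax" for variables mentioned in the last point: in the task fields you write the macro form `$(Build.BuildNumber)`, while Azure DevOps exposes the same variable to the agent with the name upper-cased and the dots replaced by underscores (`BUILD_BUILDNUMBER`). Here is a minimal Python sketch of that mapping. The helper names and the sample values are my own, made up for illustration; the real expansion is done by Azure DevOps itself, not by code like this.

```python
import re

# Matches the macro form used in task fields, e.g. $(Build.BuildNumber)
_MACRO = re.compile(r"\$\(([A-Za-z0-9_.]+)\)")


def to_env_name(variable_name: str) -> str:
    """Convert 'Build.BuildNumber' to the upper-cased, underscore form."""
    return variable_name.replace(".", "_").upper()


def expand_macros(template: str, values: dict) -> str:
    """Replace each $(Name) with its value; unknown macros are left as-is."""
    def substitute(match):
        key = to_env_name(match.group(1))
        return values.get(key, match.group(0))
    return _MACRO.sub(substitute, template)


# Hypothetical values, shaped like the variable listing in a release log.
sample_values = {
    "BUILD_BUILDNUMBER": "2019.1.30.1",
    "RELEASE_RELEASENAME": "Release-4",
}

asset_name = expand_macros(
    "AX package $(Build.BuildNumber) ($(Release.ReleaseName))", sample_values
)
print(asset_name)  # prints: AX package 2019.1.30.1 (Release-4)
```

A quick check like this is handy for previewing what an asset name or description will look like before you kick off a release.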

If you already have a successful build ready, go ahead and set up the release pipeline and run it once to see whether it succeeds or fails. If it fails while trying to connect, it could be one of the following errors:

- AADSTS65001: The user or administrator has not consented to use the application with ID '***' named 'NAME_OF_APP'. Send an interactive authorization request for this user and resource.

Here you have not successfully run the admin consent. Someone with proper permissions needs to run the "Grant permissions" in the Azure Portal.

- AADSTS50076: Due to a configuration change made by your administrator, or because you moved to a new location, you must use multi-factor authentication to access.

This is most likely because the user credentials used on the connection are secured with multi-factor authentication. Either use a different account without MFA, or disable MFA for this account, keeping in mind that it is most likely turned on for a reason.
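
If you find yourself checking failed releases often, the two errors above can be looked up with a few lines of Python. This is only a sketch under my own assumptions: it expects that you have copied the log text of the failed step into a string, the `diagnose` function is a hypothetical helper, and the hints simply paraphrase what I described above. It is not an exhaustive list of AADSTS codes.

```python
import re

# Hints paraphrased from the two failures described in this post.
HINTS = {
    "AADSTS65001": "Admin consent has not been granted for the app "
                   "registration; ask an admin to grant permissions.",
    "AADSTS50076": "The account requires multi-factor authentication; "
                   "use a dedicated account without MFA for the connection.",
}

FALLBACK = "Not covered here; look the code up in the Azure AD documentation."


def diagnose(log_text: str) -> list:
    """Return (code, hint) pairs for each distinct AADSTS code in the log."""
    seen = []
    for code in re.findall(r"AADSTS\d+", log_text):
        if code not in seen:
            seen.append(code)
    return [(code, HINTS.get(code, FALLBACK)) for code in seen]


# Example log line, shortened from the error message quoted above.
example_log = (
    "AADSTS65001: The user or administrator has not consented "
    "to use the application with ID '***' named 'NAME_OF_APP'."
)

for code, hint in diagnose(example_log):
    print(f"{code}: {hint}")
```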

I would strongly encourage everyone to set this up. Feel free to use the community forums, Twitter, or the comments section if you run into issues.